Background

Cognitive neuroscience research on music cognition seeks to understand how musical experiences shape neural dynamics, emotion, and memory, particularly in the context of cognitive decline associated with Alzheimer's disease and other dementias.

Core Problem

Despite advances in EEG and behavioral methods, current systems lack the temporal precision required for real-time neural–musical synchronization, leading to fragmented interpretations of brain–sound dynamics.

Root Cause 1

Reliance on expert-annotated music analysis persists due to the absence of an integrated, fully automatic, real-time harmonic analysis system in computational music theory.

Root Cause 2

Structural music-theoretical analysis is largely excluded from health science research on music and memory.

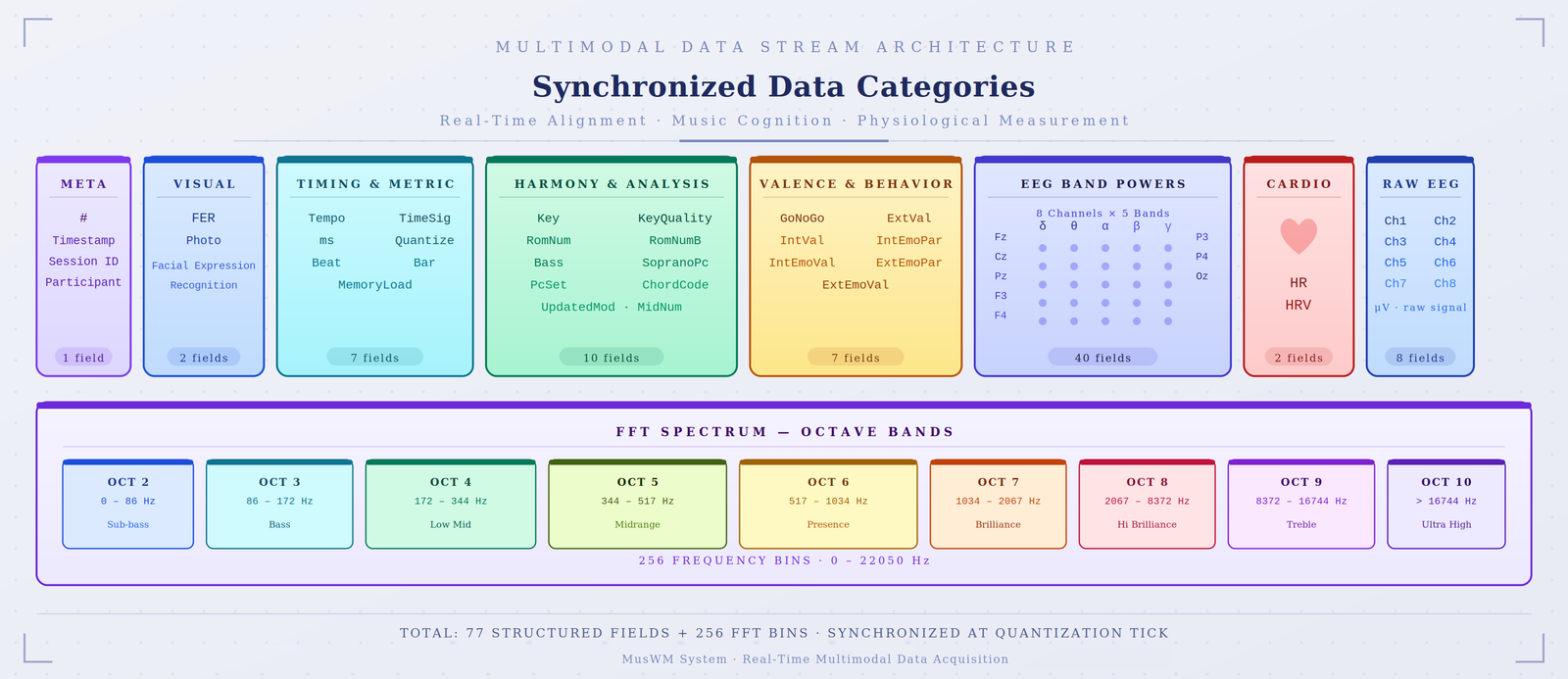

2A. System Architecture

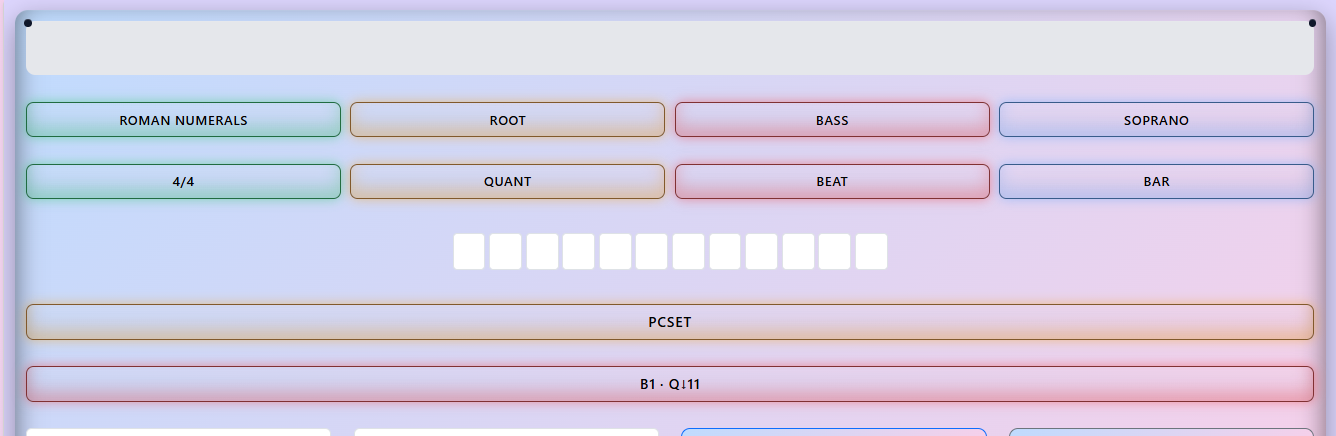

Python backend signal processing.

JavaScript real-time WebSocket layer.

Concurrent multi-stream data acquisition.
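As a rough illustration of concurrent multi-stream acquisition on the Python backend, the sketch below runs several simulated device readers under `asyncio` and stamps every sample with one shared monotonic clock. The stream names, sampling periods, and the `Sample`, `acquire`, and `run_acquisition` names are assumptions made for this example and are not taken from the system itself.

```python
import asyncio
import time
from dataclasses import dataclass


@dataclass
class Sample:
    stream: str   # hypothetical stream label, e.g. "eeg", "hr", "midi"
    t: float      # timestamp in seconds from one shared monotonic clock
    value: float  # placeholder payload; a real device would supply data here


async def acquire(stream: str, period_s: float, n: int, out: asyncio.Queue) -> None:
    """Simulate one device: emit n samples, each stamped with the shared clock."""
    for i in range(n):
        await asyncio.sleep(period_s)
        await out.put(Sample(stream, time.monotonic(), float(i)))


async def run_acquisition() -> list:
    """Run all simulated streams concurrently and return time-ordered samples."""
    out: asyncio.Queue = asyncio.Queue()
    # Hypothetical streams and rates; real device readers would replace `acquire`.
    await asyncio.gather(
        acquire("eeg", 0.004, 5, out),
        acquire("hr", 0.010, 3, out),
        acquire("midi", 0.007, 4, out),
    )
    samples = []
    while not out.empty():
        samples.append(out.get_nowait())
    # A single clock makes samples from different streams directly comparable.
    return sorted(samples, key=lambda s: s.t)


if __name__ == "__main__":
    for s in asyncio.run(run_acquisition()):
        print(f"{s.t:.4f}  {s.stream:<5} {s.value}")
```

Stamping all streams against the same clock at acquisition time is one simple way to keep later alignment between musical and physiological events well defined.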

2B. Musical Processing ✦ Solves Root Cause 1

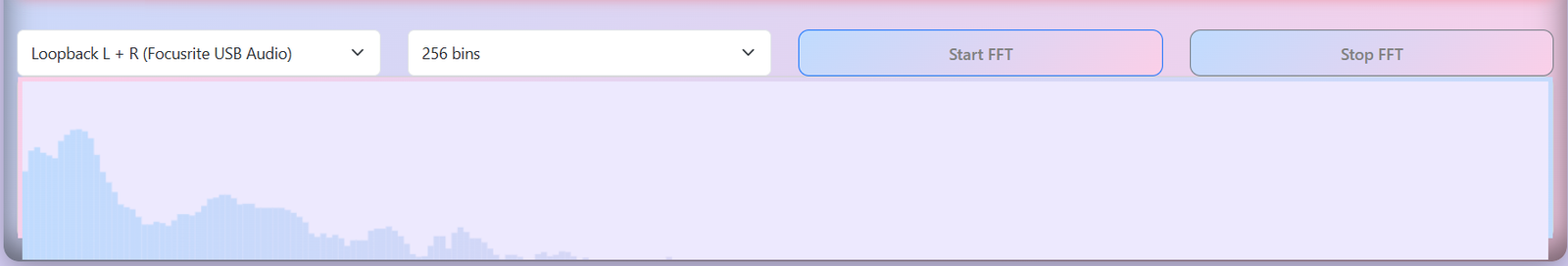

2C. Audio Processing

Browser-based Web Audio API.

2D. Behavioral Processing ✦ Real-Time Annotation

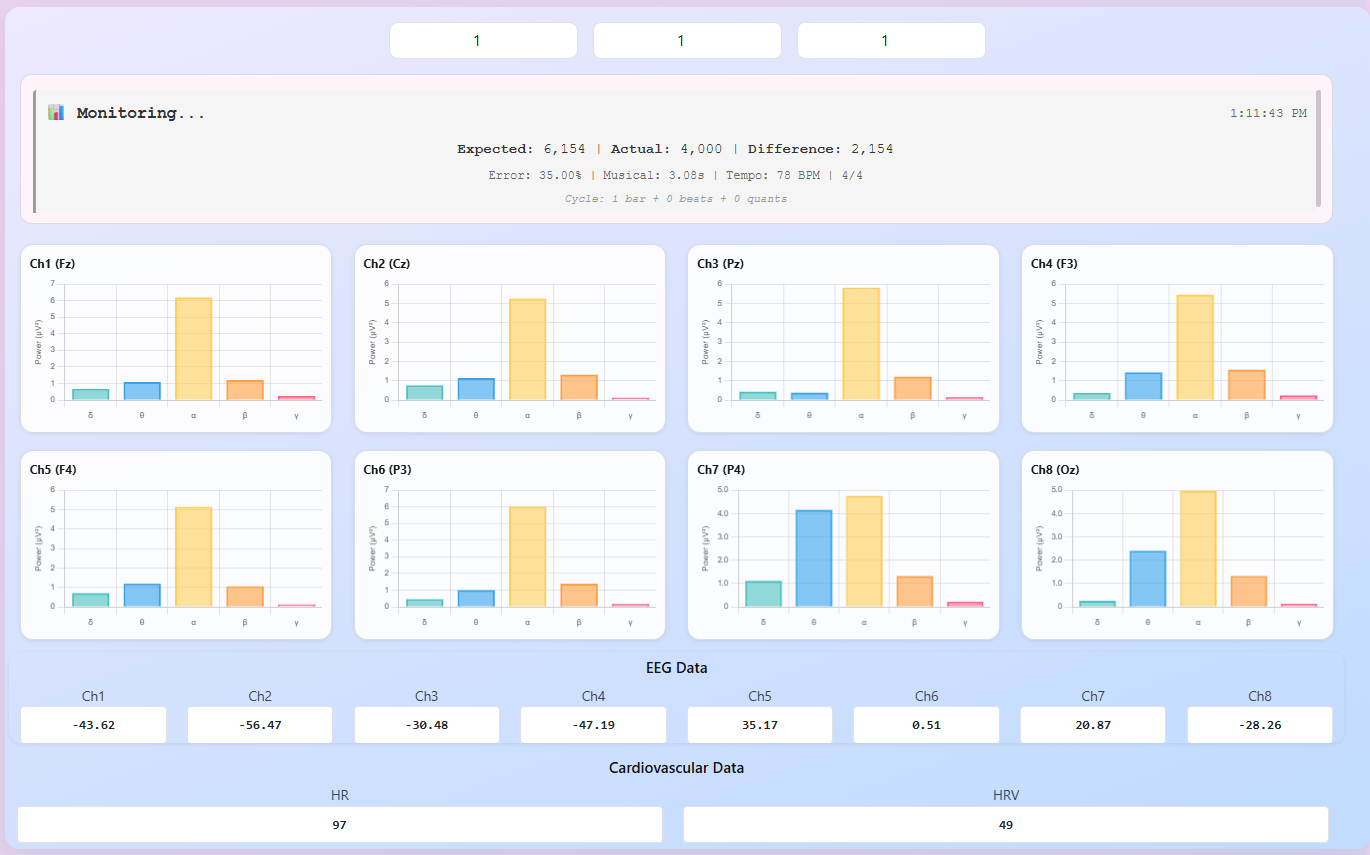

2E. Physiological Processing ✦ Solves Root Cause 2

RC1 resolved (2B): A fully automatic real-time harmonic analysis pipeline, using a 211-entry lookup tensor, emits Roman numeral tokens at millisecond precision without any human annotator or pre-labeled corpus.

RC2 resolved (2E): Harmonic tokens are directly time-stamped and mapped onto EEG band powers, HR/HRV, and behavioral responses, synchronizing musical structure with physiological signals in a single exportable multimodal corpus row.
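The two steps can be sketched in miniature: a table lookup that turns sounding notes into a Roman numeral token, and a nearest-timestamp join that attaches physiological values to that token. The five-entry `LOOKUP` table below is a hypothetical stand-in for the 211-entry tensor, and the function names, field names, and nearest-sample alignment rule are assumptions for illustration only.

```python
from bisect import bisect_left

# Hypothetical miniature table: pitch-class set relative to the tonic -> token.
# The actual system uses a 211-entry lookup tensor whose contents are not shown here.
LOOKUP = {
    frozenset({0, 4, 7}): "I",
    frozenset({2, 5, 9}): "ii",
    frozenset({5, 9, 0}): "IV",
    frozenset({7, 11, 2}): "V",
    frozenset({9, 0, 4}): "vi",
}


def roman_numeral(midi_notes, tonic_pc):
    """Map sounding MIDI notes to a Roman numeral, or None if not in the table."""
    relative = frozenset((n - tonic_pc) % 12 for n in midi_notes)
    return LOOKUP.get(relative)


def nearest(times, t):
    """Index of the entry in the sorted list `times` that is closest to t."""
    i = bisect_left(times, t)
    if i == 0:
        return 0
    if i == len(times):
        return len(times) - 1
    return i if times[i] - t < t - times[i - 1] else i - 1


def corpus_row(t, midi_notes, tonic_pc, physio_times, physio_values):
    """Join one harmonic token with the physiological sample nearest in time."""
    j = nearest(physio_times, t)
    return {"t": t, "roman": roman_numeral(midi_notes, tonic_pc), **physio_values[j]}


if __name__ == "__main__":
    physio_times = [0.0, 0.1, 0.2]
    physio_values = [
        {"alpha": 1.0, "hr": 60},
        {"alpha": 1.2, "hr": 62},
        {"alpha": 1.1, "hr": 61},
    ]
    # G major triad heard in C major (tonic pitch class 0) at t = 0.14 s.
    print(corpus_row(0.14, [67, 71, 74], 0, physio_times, physio_values))
```

Because the lookup is a constant-time table access, no annotator or labeled corpus is involved at run time; the join then yields one flat row per harmonic event that can be exported directly.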

This tool provides a reproducible infrastructure for examining how harmonic features engage neural, cardiovascular, and behavioral mechanisms of emotion and memory, supporting future applications in music therapy and translational research in cognitive neuroscience.